Weekly Highlights: Chatbots for everything, the commoditization of AI and why chat probably isn’t the end game

A rundown of the three most important developments in AI for the week ending 3/3/23

Hi Hitchhikers!

First of all, a massive welcome to the 100(!) new subscribers who hitched a ride with us this week! I’m experimenting with a new format for my highlights post this week, focusing on just the three most critical updates in AI and why they matter. There’s a lot happening in AI every week, but I’m hoping this format will help you cut through the noise and stay up to date with the most important news. I still plan to write deeper dives into specific topics once or twice a month, as well as publish more podcast episodes, but this weekly update will continue to be where you can follow my perspectives on AI on a regular basis.

In this week’s update:

Why OpenAI’s new ChatGPT API will unlock a wave of new AI products.

How cost reductions and open-source availability will quickly commoditize foundational large-scale language models.

Chat is AI’s equivalent of the Command Line Interface and what that means for how we will interact with AI in the future.

Before we continue, don’t forget to hit subscribe if you’re new to AI and want to learn more about the space.

OpenAI’s ChatGPT API will unlock a wave of new AI chatbots in our lives

On Wednesday, OpenAI launched their much-awaited API for ChatGPT:

This announcement is likely to be pivotal for the AI ecosystem for a number of reasons:

1. A chat-based API with equivalent or better performance than GPT

First of all, the ChatGPT API uses the latest version of OpenAI’s large-scale language model, GPT-3.5-turbo [1], which OpenAI claims is equivalent to or better than the flagship version of GPT available over their API, text-davinci-003, often referred to as “GPT-3.5”. When ChatGPT launched last November, many developers pointed out that it performed better than GPT, especially in conversational settings such as chatbot apps, due to the additional fine-tuning that OpenAI performed using reinforcement learning from human feedback [2]. That conversational aspect is what really sets the new ChatGPT API apart from the existing GPT-3.5 API, according to OpenAI’s announcement:

“Traditionally, GPT models consume unstructured text, which is represented to the model as a sequence of “tokens.” ChatGPT models instead consume a sequence of messages together with metadata.”

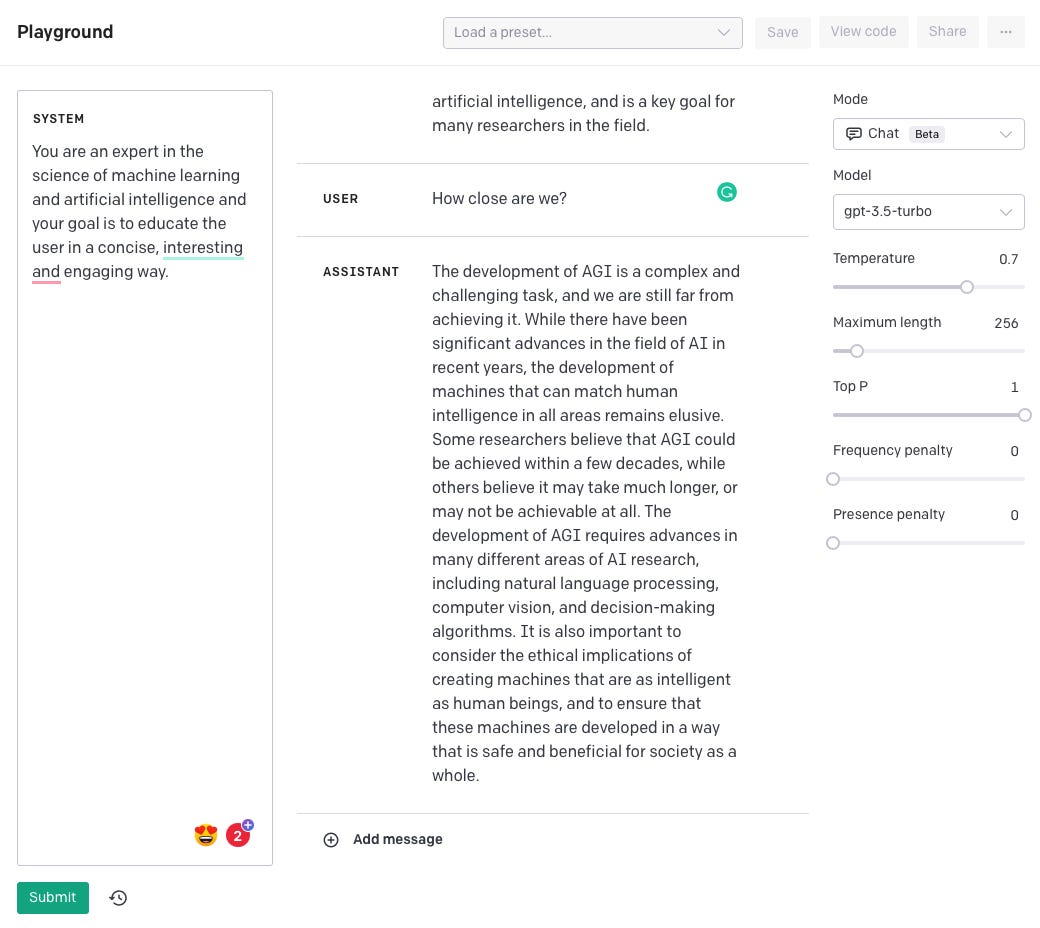

What this means is that, unlike GPT, which takes a simple text prompt as its input and then completes it, ChatGPT takes a conversation as input, between a “user” and an “assistant” with a given “system” context. ChatGPT then continues the assistant part of that conversation. Here’s an example I created using OpenAI’s Playground, an interactive version of their API, to illustrate this concept:

In the example, I told the System it was an expert in AI, added the User prompt, “What is AGI?” and pressed the Submit button. The API then returned a response as the Assistant that explained what AGI is. When I added another User message, “How close are we?”, and pressed Submit again, the API generated another response from the Assistant:

As you can see the API is now using the whole conversation context to respond, not just the isolated question “How close are we?” which would be ambiguous otherwise. The ability to provide conversations with context immediately lowers the barrier to building AI chatbots. Whereas previously developers would have to write a single prompt that describes the whole prior conversation and context, now OpenAI is doing this all behind the scenes, which means developers can focus more on building their chat interface and integrating any additional data relevant to their product rather than elaborate prompt engineering.
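To make the difference concrete, here is a minimal sketch of the two input shapes in Python. The `role` and `content` fields mirror the “system”, “user” and “assistant” roles described above. The helper names (`add_turn`, `flatten_to_prompt`), the exact system text and the placeholder assistant reply are my own inventions for illustration, and the sketch deliberately makes no call to OpenAI’s API.

```python
# The Playground conversation, expressed as a list of messages.
# Each message records who said it ("role") and what was said ("content").
conversation = [
    {"role": "system", "content": "You are an expert in AI."},
    {"role": "user", "content": "What is AGI?"},
]


def add_turn(messages, role, content):
    """Return a new message list with one more turn appended."""
    return messages + [{"role": role, "content": content}]


def flatten_to_prompt(messages):
    """The older approach: squash the whole conversation into one text
    prompt for a completion-style model to continue."""
    lines = [f"{m['role'].capitalize()}: {m['content']}" for m in messages]
    lines.append("Assistant:")  # cue the model to write the next reply
    return "\n".join(lines)


# Placeholder reply; a real reply would come back from the API.
conversation = add_turn(conversation, "assistant", "(explanation of AGI)")
conversation = add_turn(conversation, "user", "How close are we?")

print(flatten_to_prompt(conversation))
```

With a chat-style API, the developer would hand over something like `conversation` as is. With a completion-style API, they would have to design and maintain something like `flatten_to_prompt` themselves.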

2. 1/10th the price of the previous API

The second reason the announcement impressed and surprised the AI community was that OpenAI is making the ChatGPT API available at 1/10th the price of their existing GPT API. I can’t think of a recent example where a platform has launched a new version of its product at a 90% discount. This is a big move by OpenAI and there’s lots of speculation on both how and why they did it. For the how part, OpenAI shared little detail:

"Through a series of system-wide optimizations, we’ve achieved 90% cost reduction for ChatGPT since December; we’re now passing through those savings to API users."

But I’m most interested in the why part, because OpenAI today has total pricing power for their API due to lack of competition and could have taken all their cost savings as profit. So what is OpenAI's motivation behind this massive price drop? Chamath Palihapitiya shared a theory for OpenAI’s pricing strategy on the All-In podcast this week [3], which I found convincing:

“The way that it could become a trillion dollar company is by cutting the cost to a low degree that no one can effectively compete with it… That’s how they could become very very large in valuation, would be to become so pervasively relied upon where they take such a minuscule take rate in you building a company. That could be really effective for them”

Lowering prices is a smart move by OpenAI in this case because it will both accelerate the AI developer ecosystem and capture the majority of market share before competitors even come to the table with an alternative. Remember also that OpenAI’s long-term goal is to achieve Artificial General Intelligence, and the revenue from their APIs could become a significant funding source for achieving that goal in the future.

Regardless of OpenAI’s motivation, this is a huge boon for developers and yet another lowering of the barrier to entry for building AI applications. One of the common complaints about building on top of OpenAI’s platform was that the cost of using their models becomes prohibitively expensive once a product scales to millions of users. With this price reduction, developers are much less likely to try to develop their own models or use open-source ones to save money.
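Here is a rough back-of-the-envelope sketch of what a 10x price cut means at scale. Only the 10x ratio comes from the announcement. The starting price of $0.02 per 1,000 tokens and the usage figures (one million users, 30 chats each per month, 500 tokens per chat) are assumptions I made up for illustration, as is the `monthly_cost` helper.

```python
from decimal import Decimal

# Assumed price per 1,000 tokens in USD (illustrative starting point).
OLD_PRICE_PER_1K = Decimal("0.02")
# The new price is one tenth of the old one, per the announcement.
NEW_PRICE_PER_1K = OLD_PRICE_PER_1K / 10


def monthly_cost(users, chats_per_user, tokens_per_chat, price_per_1k):
    """Total monthly API bill for a hypothetical chatbot product."""
    total_tokens = users * chats_per_user * tokens_per_chat
    return Decimal(total_tokens) / 1000 * price_per_1k


# Hypothetical usage profile, invented for this example.
usage = dict(users=1_000_000, chats_per_user=30, tokens_per_chat=500)

old_bill = monthly_cost(price_per_1k=OLD_PRICE_PER_1K, **usage)
new_bill = monthly_cost(price_per_1k=NEW_PRICE_PER_1K, **usage)

print(f"old: ${old_bill:,.0f}  new: ${new_bill:,.0f}")
```

Under these made-up assumptions the monthly bill drops from $300,000 to $30,000, which is the difference between a cost that dominates a startup's budget and one that is much easier to absorb.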

3. API already being used by major consumer tech companies

The third noteworthy part of the announcement was that Snap, Instacart, and Shopify have already developed chatbots using the new ChatGPT API, instantly increasing AI's reach to hundreds of millions more users:

“Snap Inc., the creator of Snapchat, introduced My AI for Snapchat+ this week. The experimental feature is running on ChatGPT API. My AI offers Snapchatters a friendly, customizable chatbot at their fingertips that offers recommendations, and can even write a haiku for friends in seconds. Snapchat, where communication and messaging is a daily behavior, has 750 million monthly Snapchatters.”

This is significant because the common consensus is that when a new disruptive technology arrives, incumbents are at a disadvantage to startups, which can move fast to adopt the new platform and innovate on it. Clayton Christensen’s The Innovator’s Dilemma describes a classic example of this in the disk drive industry:

In the 1980s, established disk drive manufacturers like IBM and Seagate dominated the industry with their large, expensive drives that were designed for mainframe computers. However, smaller startups like Conner Peripherals and Quantum Corporation began to develop smaller, cheaper drives that were more suitable for the emerging personal computer market.

Initially, the established companies ignored these smaller drives, seeing them as inferior to their own products. However, as the PC market grew, the smaller drives gained popularity and began to improve rapidly in terms of capacity and performance. The established companies were slow to respond and were eventually overtaken by the startups, who were able to innovate faster and adapt more quickly to the changing market.

With AI, however, we’re seeing incumbent technology companies like Microsoft, Snap, Spotify, and Shopify move quickly to adopt the technology, which again speaks to the fact that the barriers to entry in AI are much lower than we might have expected. The corollary of this is that if you’re a startup thinking about using AI to disrupt an existing market with strong incumbents, it’s not a given that you will be able to move faster than them!

All of this points to one obvious trend we will see in the next few months: an invasion of chatbots into our day-to-day lives, both from new startups and from existing products moving quickly to integrate AI. Expect to see chatbots for booking travel, ordering food, buying clothes, scheduling your calendar, planning your workouts, etc.

The question on my mind is: Are we going to be speechless a year from now after the chatbot bubble pops?!

Race to the bottom: How foundational models will quickly become commoditized

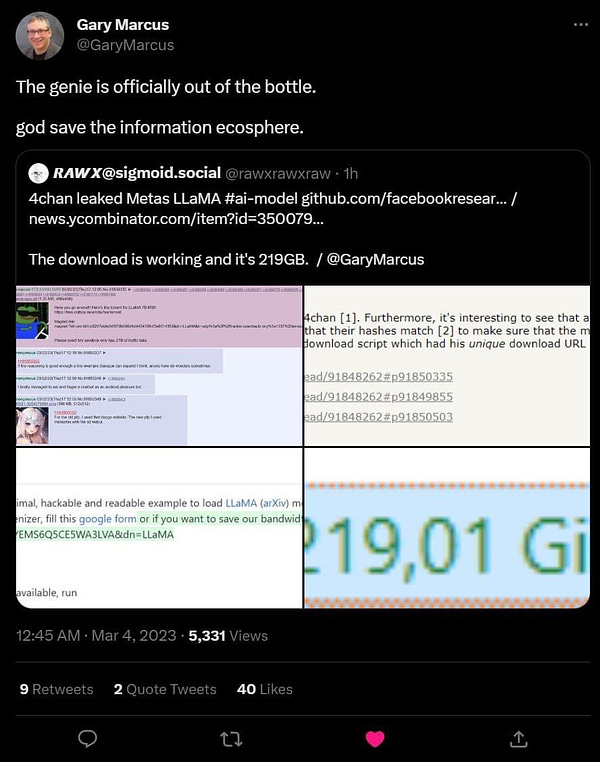

Besides OpenAI slashing its API pricing, another important development got far less attention this week: Meta’s “open source” large language model LLaMA, which was supposed to be available only to researchers, was leaked to the world on 4chan:

What was leaked was not the model itself but the weights of the model. Weights in a model are like ingredients in a cooking recipe that need to be combined in the perfect proportions to recreate a model that is equivalent to Meta’s original one. It’s only a matter of time before these weights make their way into an actual open-source model and are then uploaded to Hugging Face, a website for sharing open-source models with other developers. There’s not much Meta can do about this either, because it’s unlikely the weights in a neural network are even protected under copyright, especially given the dubious circumstances under which Meta released the model in the first place. At the same time, Stability AI, the co-creator of Stable Diffusion, is also rumored to be working on its own open-source large-scale language model to compete with OpenAI’s GPT.
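If the distinction between a model and its weights feels abstract, here is a toy sketch of it. This is nothing like LLaMA's real architecture: the one-layer `forward` function, the JSON format and all the numbers are made up purely to show that model code does nothing useful until it is paired with trained weights.

```python
import json


def forward(weights, inputs):
    """The model code: a tiny one-layer model. On its own it is just an
    empty recipe; the behavior comes from the weights passed in."""
    total = weights["bias"]
    for w, x in zip(weights["w"], inputs):
        total += w * x
    return total


# The weights: numbers learned during training, shipped as a file.
# These values are invented for illustration.
weights_file = '{"w": [0.5, -1.0, 2.0], "bias": 0.25}'
weights = json.loads(weights_file)

# Anyone holding both the code and the weights can run the model.
print(forward(weights, [1.0, 2.0, 3.0]))
```

In this picture, the leak corresponds to the contents of `weights_file` escaping. Anyone who has matching model code can then reproduce the original model's outputs.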

All this suggests that open-source models will catch up with proprietary ones very soon. In the next 6 months, I expect we will see a dozen or so foundational large-scale language models emerge, both proprietary and open-source, to compete with OpenAI. At that point, there will be real downward pressure on pricing, and perhaps that’s what OpenAI was preempting by reducing pricing for ChatGPT now. With $10B in funding secured from Microsoft, I fully expect OpenAI to continue reducing pricing further to compete, inevitably creating a race to the bottom.

We’ve seen commoditization like this happen in previous technology waves, including in the PC, smartphone, and cloud computing industries. In all of those cases, an initial player dominated the market and then as the market became more competitive, prices dropped significantly, and the technology's availability rapidly expanded. In this case, I think cloud computing is the closest analogy to foundational models in AI. Amazon Web Services dominated the market in the cloud at the beginning much like OpenAI dominates foundational models today.

As with Jeff Bezos's strategy at Amazon Web Services, OpenAI will likely continue to use “cost-plus” pricing. This will both allow them to capture market share quickly and, more importantly, accelerate the reach of AI to as many people as possible by causing the market itself to expand.
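For anyone unfamiliar with the term, cost-plus pricing simply means charging what it costs to serve a customer plus a fixed markup. Here is a minimal sketch of why a 90% cost reduction flows straight through to a 90% price cut under that model. The unit cost and the 25% markup are hypothetical numbers of mine. OpenAI has not published its costs or margins.

```python
from decimal import Decimal


def cost_plus_price(unit_cost, markup):
    """Cost-plus pricing: the cost of serving plus a fixed percentage markup."""
    return unit_cost * (1 + markup)


# Hypothetical cost to serve one unit of usage, and a hypothetical markup.
cost_before = Decimal("16.00")
markup = Decimal("0.25")

# The 90% cost reduction OpenAI cited in its announcement.
cost_after = cost_before * (1 - Decimal("0.90"))

price_before = cost_plus_price(cost_before, markup)
price_after = cost_plus_price(cost_after, markup)

print(f"before: ${price_before:.2f}  after: ${price_after:.2f}")
```

Because the markup is a fixed percentage, the price falls by exactly the same proportion as the cost. A company maximizing short-term profit would instead hold the price and pocket the difference.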

Chat is the Command Line Interface of AI

With the impending proliferation of chatbots, it’s easy to assume that we are destined to interact with AI by tapping our fingers for the foreseeable future. I believe that won’t be the case, and it’s easy to see why if we again look at past technology waves as an indicator of what’s to come. When PCs became popular, Microsoft’s MS-DOS was the de facto operating system. Users had to type commands into a command line interface, or CLI, to execute their tasks. At the time, this probably felt novel for people using PCs compared to, say, using a calculator where you can only type in numbers! Just as MS-DOS played a crucial role in making personal computers more accessible and user-friendly at the time, chatbots are making AI more accessible and user-friendly for a broader range of people now.

Our time using MS-DOS on PCs was short-lived, however, with the dawn of the Graphical User Interface, famously pioneered at Xerox PARC and brought into production by Steve Jobs in Apple’s Macintosh computer. Steve’s genius was to realize that pointing and clicking on things with a mouse was a far better user experience than typing commands into a CLI. In a similar vein, I expect that someone will come up with a far better way to interface with AI than a chatbox. Maybe it will be with voice, like in the movie Her, although I’m a little skeptical given our misadventures with Voice Assistants over the last decade. It could even be AI reading our minds, like in an example published this week by researchers who were able to use Stable Diffusion to recreate images from people’s minds via MRI output:

What’s more interesting to me though is how the command line interface became the de facto interface for developers and still is, long after the GUI emerged. That implies that the chatbot experience we are using today could become the default form of programming for AI developers. OpenAI hinted at this in their announcement with the introduction of a new syntax they call “Chat Markup Language” for inputting conversations into the ChatGPT API. In their documentation for ChatML they share the following:

ChatML documents consist of a sequence of messages. Each message contains a header (which today consists of who said it, but in the future will contain other metadata) and contents (which today is a text payload, but in the future will contain other datatypes).
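To give a feel for what “a sequence of messages with a header and contents” could look like as raw text, here is a small sketch that renders a list of messages into a ChatML-style document. The `<|im_start|>` and `<|im_end|>` delimiters are my recollection of OpenAI's draft ChatML notes and should be treated as an assumption, and the `to_chatml` helper is hypothetical rather than part of any OpenAI library.

```python
# Delimiter strings assumed from OpenAI's draft ChatML notes.
IM_START = "<|im_start|>"
IM_END = "<|im_end|>"


def to_chatml(messages):
    """Render messages as text: a header (who is speaking) on one line,
    then the contents, then a closing delimiter."""
    parts = []
    for m in messages:
        parts.append(f"{IM_START}{m['role']}\n{m['content']}{IM_END}")
    # Leave an open assistant header so the model writes the next message.
    parts.append(f"{IM_START}assistant\n")
    return "\n".join(parts)


messages = [
    {"role": "system", "content": "You are an expert in AI."},
    {"role": "user", "content": "What is AGI?"},
]
document = to_chatml(messages)
print(document)
```

The point is less the exact syntax than the shape: a conversation becomes a structured document, which is what makes “chatting” start to look like a programming format.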

Andrej Karpathy, a leading AI researcher who previously led the self-driving car division at Tesla and has now returned to OpenAI, talks about this new type of programming in a recent tweet:

So, while we may not have discovered the killer interface for AI, we may have inadvertently found the killer programming language for AI: Just chatting to it.

That’s a wrap for this week folks. Please let me know if you like this new format for weekly updates!

[1] GPT stands for "Generative Pre-trained Transformer" and it is a type of language model developed by OpenAI. GPT models are designed to generate human-like text by predicting the likelihood of the next word in a given sequence of words.

The GPT models are pre-trained on large amounts of text data from the internet, which helps them learn patterns and relationships between words and sentences. Once trained, these models can be fine-tuned for specific tasks, such as text classification, language translation, or generating text for chatbots.

OpenAI's latest version of GPT, GPT-3, is currently one of the largest and most powerful language models available, with billions of parameters. GPT-3 has shown impressive performance on a range of natural language processing tasks, including language translation, question answering, and text generation.

Learn more about large-scale language models in my deep dive into deep learning part 3.

[2] Reinforcement Learning from Human Feedback (RLHF) is a machine learning approach that combines reinforcement learning (RL) principles with human feedback to train AI systems. In RLHF, a machine learning model is trained to take actions in an environment in order to maximize a reward signal. The model receives feedback in the form of rewards or penalties for its actions, derived from human judgments of its outputs, which it uses to adjust its behavior and improve its performance over time. This is how ChatGPT became so good at conversation.

[3] Go to minute 25 to hear the conversation on OpenAI in the All-In podcast: