🗞️AI highlights from this week (1/20/23)

Predictions for an AI-filled future, Google shares AI progress, debunking GPT-4 rumors and more…

Hi readers!

Here are some of the most interesting AI updates that I read in the last week.

P.S. Don’t forget to hit subscribe if you’re new to AI and want to learn more about the space.

Highlights

1/ Founder of Stability AI shares predictions for an AI-filled future

Emad Mostaque, the founder of Stability AI, the company behind Stable Diffusion, shared an inspiring view of how AI will impact our future with Peter Diamandis, host of the Moonshots and Mindsets podcast. In the wide-ranging discussion, Emad covered how AI will impact the entertainment industry, what Generative AI means for copyright and ownership, and whether AI should have a moral compass.

“This is one of the biggest evolution for humanity ever!” - Emad Mostaque

The first 50 minutes are definitely worth listening to!

2/ Google finally shares an update on their progress on AI

Until 2022, Google was considered to be at the forefront of advancements in AI. With the launch of Stable Diffusion, DALL-E and ChatGPT, however, Google’s thought leadership and research in AI took a backseat to startups like Stability AI and OpenAI. It’s no surprise, then, that after Google reportedly issued an internal “Code Red” on the need to move faster on AI, we finally got an update last week on what they’ve been up to.

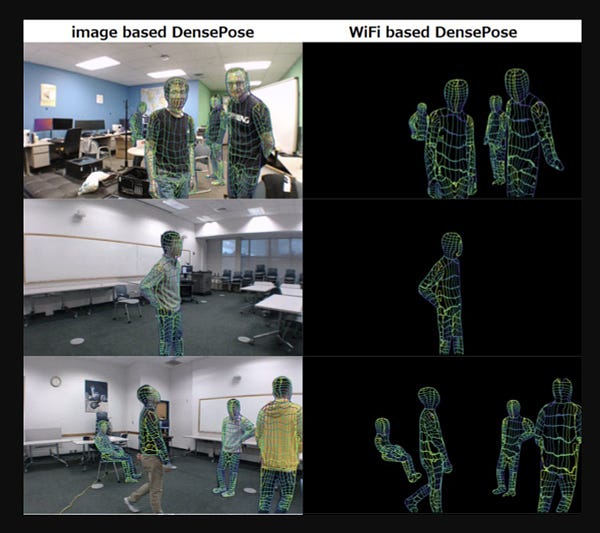

Google’s blog post was authored on behalf of their whole AI research team by Google’s most senior researcher, Jeff Dean. It covered a range of topics including their latest advancements in Large Language Models, Computer Vision, Generative AI and much more.

Dean discusses advances in language models, specifically highlighting the team's work on LaMDA and PaLM, which allow for safe and high-quality dialog in natural conversations. He also mentions their work on multimodal models, which can handle multiple modalities simultaneously, both as inputs and outputs.

Dean also highlights advances in generative models for imagery, video, and audio, mentioning specific techniques such as generative adversarial networks, diffusion models, autoregressive models, and Contrastive Language-Image Pre-training (CLIP). Finally, he emphasizes the importance of responsible AI, stating that they apply their AI Principles in practice and focus on AI that is useful and benefits users and society.

In the post, Dean also pointed out multiple times that Google introduced the Transformer model in 2017, which unlocked the rapid increase in AI innovation we’re seeing today. He also shared that many of the innovations that Google has made in AI are already being integrated into its existing products.

You can read the whole post here, but fair warning, it’s pretty dense!

3/ OpenAI CEO debunks rumors about GPT-4

Sam Altman, CEO of OpenAI, debunked the rumors about GPT-4 in an interview with Connie Loizos for StrictlyVC.

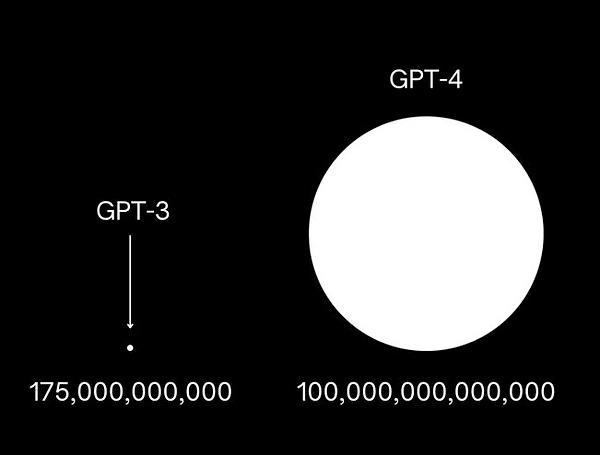

GPT-4 is the much-anticipated successor to GPT-3, the large language model that underlies ChatGPT. As I mentioned in my first post, Don’t believe the hype?, there’s a lot of excitement and rumor around GPT-4 and some of its features, including a prediction that it will have 100X more parameters than GPT-3:

Sam’s response to this rumor was “People are begging to be disappointed and they will be.”

Here’s the full interview:

4/ Predictions on how Big Tech will approach AI

Ben Thompson shared his predictions for how Google, Amazon, Meta (Facebook), Microsoft and Apple will approach AI in his recent post, AI and the Big Five. In Thompson's view, AI is a new epoch in technology, and he shared the following predictions on how the epoch might develop:

Apple's efforts in AI have been largely proprietary, but the company recently received a gift from the open source community in the form of the Stable Diffusion model, which is small and efficient enough to run on consumer graphics cards and even an iPhone.

Amazon's prospects in this space will depend on factors such as the usefulness of generative AI products in the real world and Apple's progress in building local generation techniques.

Google, which has been a leader in using machine learning for its search and consumer-facing products, may face a similar fate in the AI world to Eastman Kodak’s infamous downfall when digital cameras became popular. The shift from a mobile-first world to an AI-first world may present challenges for Google in providing the "right answer" rather than just presenting possible answers.

Meta's data centers are primarily for CPU compute, which is necessary for its services and deterministic ad model, but the long-term solution for improving its ad targeting and measurement is through probabilistic models built by massive fleets of GPUs.

Microsoft is well-positioned in the AI space with its cloud service, Azure, that sells GPU access and its exclusive partnership with OpenAI. Microsoft is investing in the infrastructure for the AI epoch through this partnership and its Bing search engine, which has the potential to gain massive market share with the incorporation of ChatGPT-like results.

You can listen to the whole analysis here too:

5/ The first copyright lawsuit against Stable Diffusion

Jon Stokes offers a great analysis of the first major lawsuit alleging that Stable Diffusion violates the copyright of artists whose images were used in training. Cases like this one and the recent copyright lawsuit against Microsoft’s GitHub Copilot product are likely to be pivotal in establishing how Generative AI content will be viewed in the eyes of the law.

Here’s Jon’s post:

Everything else…

Finally, in case you missed it, I also shared Part 2 of my series on the origins of Deep Learning:

That’s all for this week!